Worksona Delegator: A Pattern Library for Agent Coordination Topologies

#worksona #delegation #patterns #distributed-systems #akka #orchestration

David Olsson

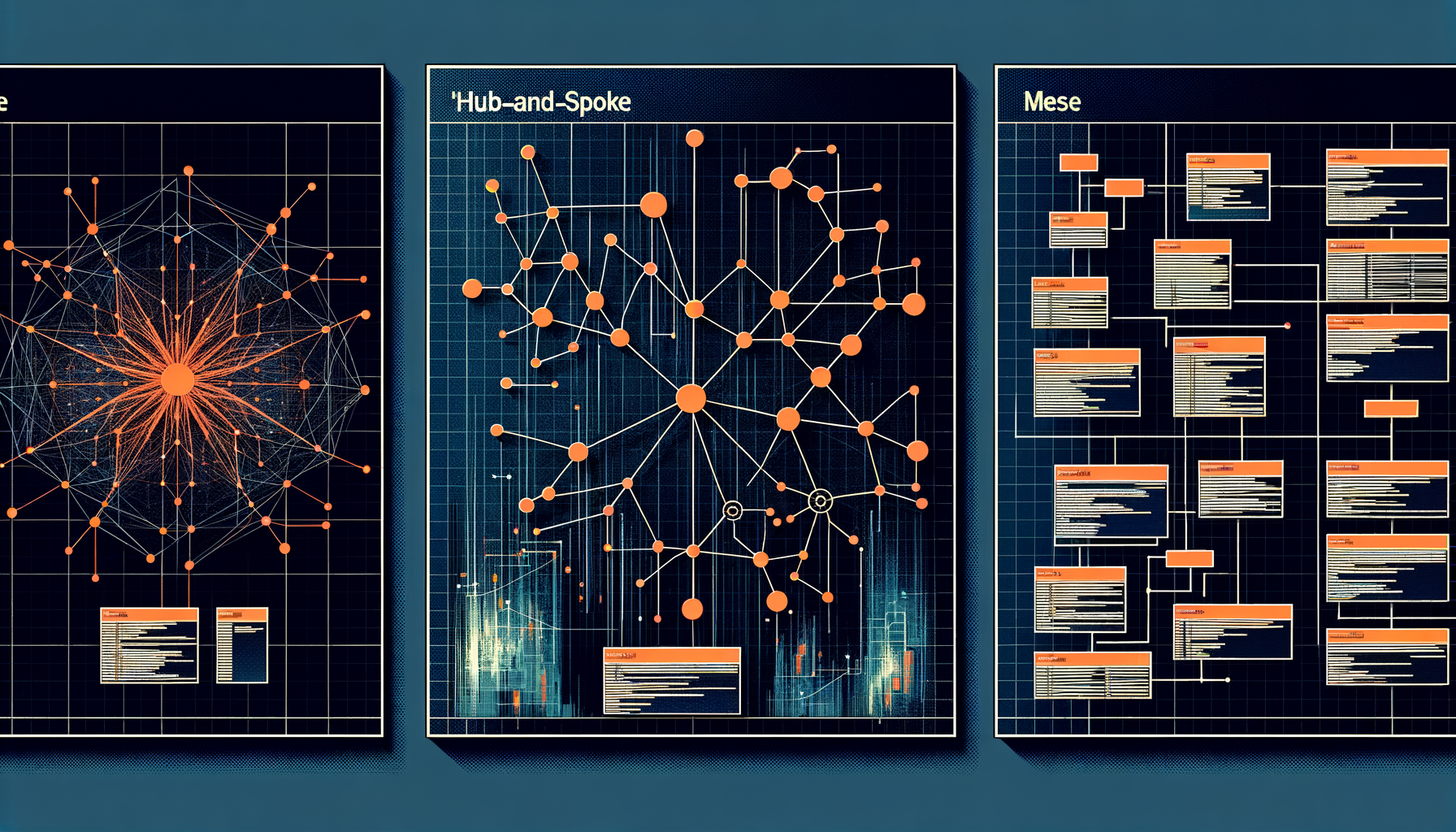

The Worksona Delegator documentation library defines ten delegation patterns for coordinating agents in complex work systems. Each pattern carries a formal specification: when to use it, what structural constraints it satisfies, and how it behaves under failure conditions.

A five-gate decision flow guides selection between patterns based on task properties. A seven-dimension complexity scoring engine quantifies task characteristics — parallelism, state coupling, failure sensitivity, coordination depth, latency tolerance, domain homogeneity, and output convergence — and recommends the coordination topology that satisfies the task's structural requirements. The library also defines the Manifesting Component pattern, which enables agents to deploy themselves: an agent that carries its own deployment manifest can provision the resources it needs when instantiated.

Why is it useful?

Agent coordination remains one of the hardest open problems in multi-agent systems at scale. Most implementations select a coordination pattern intuitively and discover its failure modes in production. The Delegator library makes the choice explicit and auditable. Given a task's complexity profile, the scoring engine narrows the candidate patterns to those that satisfy the task's structural constraints.

The five-gate decision flow is sequential and eliminative. Each gate removes patterns that cannot satisfy a required property, so the output is always a valid option set rather than a ranking that includes inappropriate choices. This matters in production design work: we want to know which patterns are disqualified and why, not just which pattern scored highest on an abstract metric.
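To make the eliminative behaviour concrete, here is a minimal sketch of a gate runner. It is not the library's implementation: the `Gate` interface, the `runGates` function, and the two example gates (including their cut-off values) are hypothetical, chosen only to show how each gate removes candidates and records why.

```typescript
// Hypothetical sketch of an eliminative gate flow. Names and gate rules are
// illustrative assumptions, not the Delegator library's actual API.
type Pattern =
  | 'sequential-pipeline'
  | 'parallel-fan-out'
  | 'supervised-pool'
  | 'consensus-ring';

interface TaskProperties {
  parallelism: number;
  stateCoupling: number;
  failureSensitivity: number;
}

interface Gate {
  name: string;
  // Returns a reason when the pattern is disqualified, or null when it passes.
  disqualify(pattern: Pattern, task: TaskProperties): string | null;
}

interface GateResult {
  valid: Pattern[];
  rejected: { pattern: Pattern; gate: string; reason: string }[];
}

// Runs the gates in order. A pattern removed by one gate is never
// reconsidered by a later gate, so the survivors form the valid option set.
function runGates(candidates: Pattern[], gates: Gate[], task: TaskProperties): GateResult {
  let valid = [...candidates];
  const rejected: GateResult['rejected'] = [];
  for (const gate of gates) {
    const survivors: Pattern[] = [];
    for (const pattern of valid) {
      const reason = gate.disqualify(pattern, task);
      if (reason === null) survivors.push(pattern);
      else rejected.push({ pattern, gate: gate.name, reason });
    }
    valid = survivors;
  }
  return { valid, rejected };
}

// Two illustrative gates (assumed rules, not taken from the library).
const gates: Gate[] = [
  {
    name: 'concurrency',
    disqualify: (p, t) =>
      p === 'sequential-pipeline' && t.parallelism >= 0.3
        ? 'task requires concurrent execution'
        : null,
  },
  {
    name: 'shared-state',
    disqualify: (p, t) =>
      p === 'parallel-fan-out' && t.stateCoupling > 0.7
        ? 'workers would contend on shared mutable state'
        : null,
  },
];

const result = runGates(
  ['sequential-pipeline', 'parallel-fan-out', 'supervised-pool', 'consensus-ring'],
  gates,
  { parallelism: 0.8, stateCoupling: 0.9, failureSensitivity: 0.2 },
);
```

The `rejected` list is the auditable part: every disqualified pattern carries the gate that removed it and the reason, which is the information a ranking alone would not give us.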

The seven scoring dimensions are defined precisely enough that two architects scoring the same task independently should produce profiles within a narrow range of each other. That reproducibility is what makes the library useful as a shared design language rather than a collection of guidelines.
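One way to check that reproducibility in practice is to compare two independently scored profiles dimension by dimension. The sketch below is an assumption on our part: the `maxDivergence` and `profilesAgree` helpers and the 0.1 tolerance are hypothetical, since the library's own definition of "a narrow range" is not reproduced here.

```typescript
// Hypothetical agreement check between two architects' profiles.
// The 0.1 default tolerance is an illustrative assumption.
interface ComplexityProfile {
  parallelism: number;
  stateCoupling: number;
  failureSensitivity: number;
  coordinationDepth: number;
  latencyTolerance: number;
  domainHomogeneity: number;
  outputConvergence: number;
}

const DIMENSIONS: (keyof ComplexityProfile)[] = [
  'parallelism',
  'stateCoupling',
  'failureSensitivity',
  'coordinationDepth',
  'latencyTolerance',
  'domainHomogeneity',
  'outputConvergence',
];

// Finds the dimension on which the two profiles differ the most.
function maxDivergence(
  a: ComplexityProfile,
  b: ComplexityProfile,
): { dimension: keyof ComplexityProfile; delta: number } {
  let worst = { dimension: DIMENSIONS[0], delta: 0 };
  for (const dimension of DIMENSIONS) {
    const delta = Math.abs(a[dimension] - b[dimension]);
    if (delta > worst.delta) worst = { dimension, delta };
  }
  return worst;
}

// Two profiles agree when no single dimension differs by more than the tolerance.
function profilesAgree(a: ComplexityProfile, b: ComplexityProfile, tolerance = 0.1): boolean {
  return maxDivergence(a, b).delta <= tolerance;
}

const architectA: ComplexityProfile = {
  parallelism: 0.8, stateCoupling: 0.5, failureSensitivity: 0.4, coordinationDepth: 0.3,
  latencyTolerance: 0.6, domainHomogeneity: 0.7, outputConvergence: 0.9,
};
const architectB: ComplexityProfile = {
  parallelism: 0.75, stateCoupling: 0.8, failureSensitivity: 0.4, coordinationDepth: 0.3,
  latencyTolerance: 0.6, domainHomogeneity: 0.7, outputConvergence: 0.9,
};

const divergence = maxDivergence(architectA, architectB);
const agree = profilesAgree(architectA, architectB);
```

Here the two architects disagree mainly on `stateCoupling`, which tells us which dimension definition to revisit before running the gate flow.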

How and where does it apply?

The library is a design-time reference for Worksona agent architects. When specifying a new multi-agent workflow, we run the complexity scoring engine first to characterize the task, then run the gate flow to produce the recommended pattern or valid option set.

The ten patterns cover the coordination topologies we encounter in practice: Sequential Pipeline, Parallel Fan-Out, Supervised Pool, Event-Driven Mesh, Hierarchical Delegation, Consensus Ring, and four specialized variants. The Manifesting Component pattern is specific to the Akka Agent Generator context: an agent that includes its own deployment manifest can provision itself when instantiated by the generator, removing the manual deployment configuration step from the round-trip.
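The Manifesting Component idea can be sketched without any Akka specifics. Everything in the block below is hypothetical: the `DeploymentManifest`, `Provisioner`, and `ManifestingAgent` shapes and the `instantiate` function are our own illustrative names, not the Akka Agent Generator's API. The point is only that the agent declares what it needs and the generator provisions from that declaration.

```typescript
// Hypothetical sketch of the Manifesting Component idea.
// None of these types come from Akka or the Worksona generator.
interface ResourceRequest {
  kind: string; // e.g. 'queue' or 'store' (illustrative kinds)
  name: string;
}

interface DeploymentManifest {
  agentName: string;
  resources: ResourceRequest[];
}

interface Provisioner {
  // Creates the resource and returns a handle the agent can use.
  provision(request: ResourceRequest): string;
}

interface ManifestingAgent {
  manifest: DeploymentManifest;
  start(handles: Map<string, string>): void;
}

// The generator reads the agent's own manifest, provisions each resource,
// then starts the agent with the resulting handles. No separate deployment
// configuration is written by hand.
function instantiate(agent: ManifestingAgent, provisioner: Provisioner): Map<string, string> {
  const handles = new Map<string, string>();
  for (const request of agent.manifest.resources) {
    handles.set(request.name, provisioner.provision(request));
  }
  agent.start(handles);
  return handles;
}

// In-memory stand-ins used only to exercise the sketch.
const inMemoryProvisioner: Provisioner = {
  provision: (request) => `${request.kind}:${request.name}`,
};

let started = false;
const summarizer: ManifestingAgent = {
  manifest: {
    agentName: 'summarizer',
    resources: [
      { kind: 'queue', name: 'inbox' },
      { kind: 'store', name: 'results' },
    ],
  },
  start: () => {
    started = true;
  },
};

const handles = instantiate(summarizer, inMemoryProvisioner);
```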

The gate flow below captures the four most common outcomes from the first three gates. The full flow extends to all five gates, but these four branches account for the majority of coordination decisions we encounter.

The complexity profile interface below is the data structure that moves between the scoring engine and the gate flow. Each dimension is a normalized float between 0 and 1. The selectPattern function shown here covers the first three gates; the full implementation extends the conditional chain to cover all ten patterns.

```typescript
// Only the four identifiers returned below; the full union covers all ten patterns.
type DelegationPattern =
  | 'sequential-pipeline'
  | 'supervised-pool'
  | 'consensus-ring'
  | 'parallel-fan-out';

interface ComplexityProfile {
  parallelism: number;        // 0–1: can tasks run concurrently?
  stateCoupling: number;      // 0–1: do agents share mutable state?
  failureSensitivity: number; // 0–1: does one failure block all?
  coordinationDepth: number;  // 0–1: how deep is the delegation chain?
  latencyTolerance: number;   // 0–1: can coordination overhead be absorbed?
  domainHomogeneity: number;  // 0–1: are all subtasks the same type?
  outputConvergence: number;  // 0–1: must results be merged?
}

// Covers the first three gates only. Checks run in order and the first match wins.
function selectPattern(profile: ComplexityProfile): DelegationPattern {
  if (profile.parallelism < 0.3) return 'sequential-pipeline';
  if (profile.stateCoupling > 0.7) return 'supervised-pool';
  if (profile.failureSensitivity > 0.8) return 'consensus-ring';
  return 'parallel-fan-out';
}
```

The threshold values (0.3, 0.7, 0.8) are calibrated against the agent workflows we have built and observed in production. They are not fixed constants — the library documents how to adjust thresholds when the default calibration does not match a team's specific risk and latency profile.
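A minimal sketch of what threshold adjustment could look like follows. The `Thresholds` shape, its field names, and `selectPatternWith` are hypothetical; the library's documented adjustment procedure may differ. Only the default values (0.3, 0.7, 0.8) and the gate order come from the function above.

```typescript
// Hypothetical sketch: the three-gate selection with overridable thresholds.
// Field and function names are illustrative assumptions.
type DelegationPattern =
  | 'sequential-pipeline'
  | 'supervised-pool'
  | 'consensus-ring'
  | 'parallel-fan-out';

// Only the three dimensions the first three gates read.
interface GateInputs {
  parallelism: number;
  stateCoupling: number;
  failureSensitivity: number;
}

interface Thresholds {
  minParallelism: number;        // below this, fall back to a sequential pipeline
  maxStateCoupling: number;      // above this, use a supervised pool
  maxFailureSensitivity: number; // above this, use a consensus ring
}

const DEFAULT_THRESHOLDS: Thresholds = {
  minParallelism: 0.3,
  maxStateCoupling: 0.7,
  maxFailureSensitivity: 0.8,
};

// Same gate order as selectPattern; overrides replace individual defaults.
function selectPatternWith(
  profile: GateInputs,
  overrides: Partial<Thresholds> = {},
): DelegationPattern {
  const t: Thresholds = { ...DEFAULT_THRESHOLDS, ...overrides };
  if (profile.parallelism < t.minParallelism) return 'sequential-pipeline';
  if (profile.stateCoupling > t.maxStateCoupling) return 'supervised-pool';
  if (profile.failureSensitivity > t.maxFailureSensitivity) return 'consensus-ring';
  return 'parallel-fan-out';
}

const task: GateInputs = { parallelism: 0.9, stateCoupling: 0.2, failureSensitivity: 0.75 };

// With the default calibration this task falls through to fan-out.
const withDefaults = selectPatternWith(task);

// A more risk-averse team lowers the failure-sensitivity cut-off.
const riskAverse = selectPatternWith(task, { maxFailureSensitivity: 0.7 });
```

Keeping the defaults in one object and passing overrides per call makes a team's deviation from the default calibration visible at the call site rather than buried in edited constants.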