The Delegator Evolution: Five Versions of a Multi-Agent Pattern

#worksona #portfolio #delegator #multi-agent #orchestration #iteration

David Olsson

The delegator is the load-bearing idea in Worksona. A coordinator receives a query, decomposes it into subtasks, dispatches those subtasks to specialized worker agents, and synthesizes the results into a final output. That sentence describes every version. What changed across five iterations was everything else: how patterns were represented, how agents were selected, how responses were structured, and how results were reported.

This post traces that evolution. Each version solved a real problem; none of the changes were speculative.

What is the delegator pattern?

The delegator is a coordinator-worker architecture for language models. The coordinator (the delegator agent) receives a user query and decides which specialized agents need to work on it: a researcher, an analyst, a writer, a critic, a synthesizer. Each worker executes its subtask in its own context. Results flow back to the coordinator, which compiles a final report. The user sees one coherent output built from what was actually the work of several agents.
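The loop fits in a few lines of JavaScript. This is a minimal sketch, not Worksona's implementation: the worker functions are hypothetical stubs standing in for LLM calls, and `workers`, `decompose`, and `runDelegator` are illustrative names. Decomposition here simply assigns the query to every role, where a real coordinator would ask a model to choose roles and write subtasks.

```javascript
// Minimal coordinator-worker sketch (hypothetical; stub workers stand in for LLM calls).

// Each worker is an async function: subtask in, result out.
// In a real system each would call a model in its own, separate context.
const workers = {
  researcher: async (task) => `Background on ${task}`,
  analyst: async (task) => `Patterns in ${task}`,
  critic: async (task) => `Risks in ${task}`,
};

// Hypothetical decomposition: one subtask per registered role.
function decompose(query) {
  return Object.keys(workers).map((role) => ({ role, task: query }));
}

// Dispatch all subtasks concurrently, then synthesize a single report.
async function runDelegator(query) {
  const subtasks = decompose(query);
  // Promise.all preserves input order, so sections come back in role order.
  const results = await Promise.all(
    subtasks.map(async ({ role, task }) => ({ role, output: await workers[role](task) }))
  );
  // Synthesis is plain concatenation here; a real coordinator would make another model call.
  return results.map(({ role, output }) => `## ${role}\n${output}`).join("\n\n");
}

runDelegator("EV battery supply chains").then((report) => console.log(report));
```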

Why build it this way?

A single LLM call handles many tasks well. It handles complex, multi-domain analysis poorly. The problem is not model capability; mixing roles in one context produces averaged, unfocused output. Separating concerns across dedicated agents produces sharper work: a researcher running at a low temperature and focused on accuracy, a writer at a higher temperature with room to be expressive, a critic instructed to find fault. The pattern enforces specialization.
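That specialization maps naturally onto per-role settings. The sketch below is illustrative only: the temperature values, prompt wording, the `ROLES` table, and the `buildRequest` helper are hypothetical, and the payload is a generic chat-style shape rather than any specific provider's API.

```javascript
// Hypothetical per-role settings; values are illustrative, not Worksona's.
const ROLES = {
  researcher: { temperature: 0.2, system: "Report verifiable facts. Cite sources. Do not speculate." },
  writer: { temperature: 0.8, system: "Turn the findings into clear, engaging prose." },
  critic: { temperature: 0.3, system: "Find weaknesses, gaps, and unsupported claims." },
};

// Build a generic chat-style payload; each role gets its own system prompt and temperature.
function buildRequest(role, task) {
  const cfg = ROLES[role];
  if (!cfg) throw new Error(`Unknown role: ${role}`);
  return {
    temperature: cfg.temperature,
    messages: [
      { role: "system", content: cfg.system },
      { role: "user", content: task },
    ],
  };
}

console.log(buildRequest("critic", "Review the draft market analysis."));
```

Keeping role behavior in data rather than in code is also what makes it easy to move agent definitions out of the source later.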

The architectural evolution across five versions

Version 1 established the baseline. A single delegator agent in a browser-based interface analyzed user queries, spawned worker agents dynamically, and compiled a final report. The architecture was a monolith: a small number of JavaScript files, API keys stored in localStorage, agents defined inline. It proved that the core loop worked.

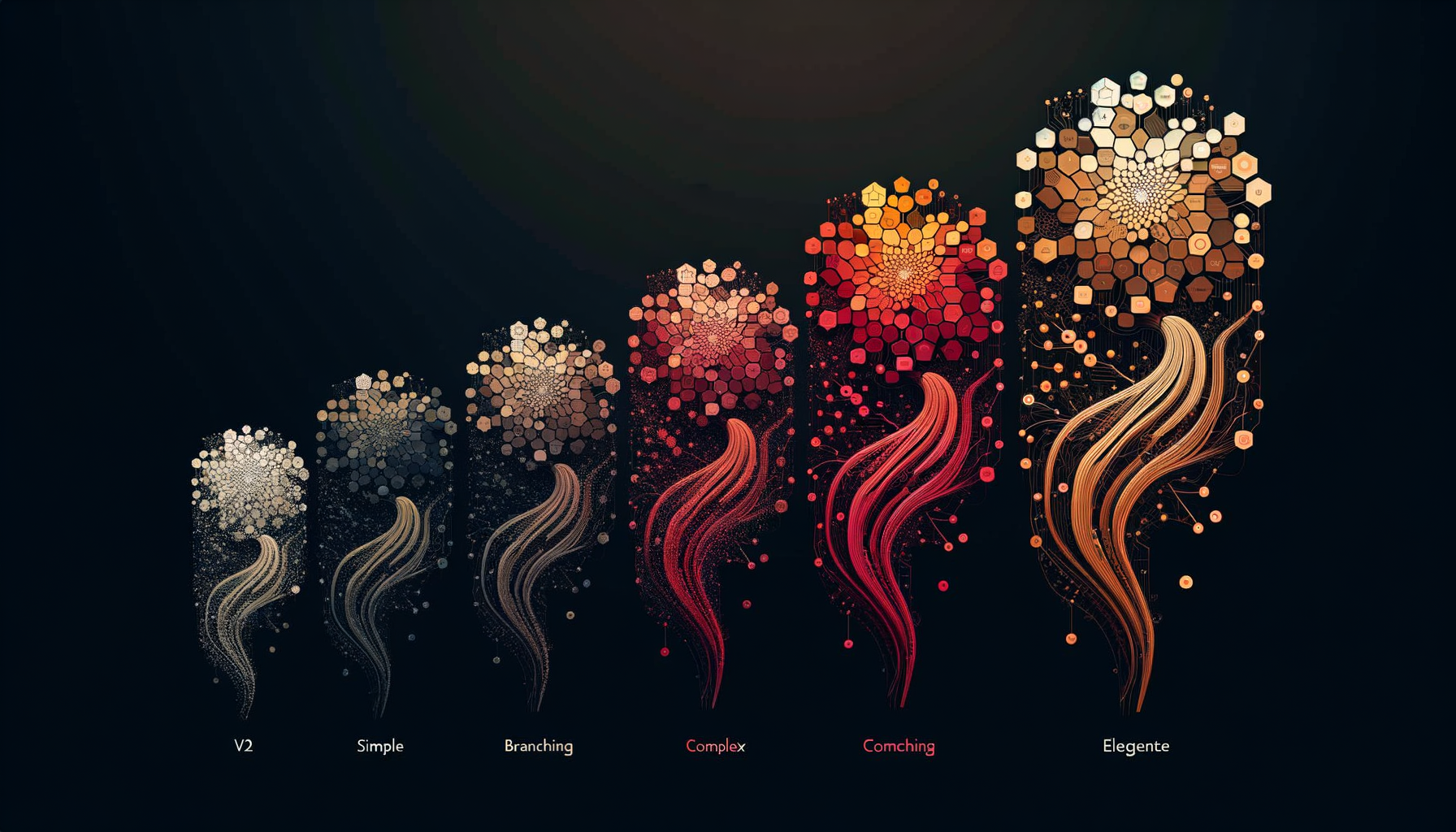

Version 2 formalized the pattern library. We introduced six named delegation strategies — Hierarchical, Peer-to-Peer, Iterative Refinement, Competitive Evaluation, Hierarchical Federation, and Dynamic Adaptation — each implemented as a class following the Strategy Pattern. Agents moved out of inline definitions into JSON files. A state manager singleton tracked execution. Mermaid sequence diagrams were auto-generated after each run. This was the version where the architecture became legible.

```javascript
// v2: Six strategies registered at runtime, swappable without restart.
// delegationRegistry, query and agentPool are defined elsewhere in the app.
const strategy = delegationRegistry.getStrategy('competitive-evaluation');
const result = await strategy.execute(query, agentPool);
```
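Behind a call like that, a Strategy Pattern registry can be quite small. In this sketch only the `getStrategy` and `execute(query, agentPool)` names come from the v2 snippet; the class names, the agent shape (`{ run(query) }`), the simplified hierarchical behavior, and the placeholder scorer (longest answer wins) are assumptions for illustration.

```javascript
// Hypothetical Strategy Pattern registry; only getStrategy/execute come from the v2 snippet.

// Base class: every strategy exposes the same async execute(query, agentPool) interface.
class DelegationStrategy {
  async execute(query, agentPool) {
    throw new Error("execute() not implemented");
  }
}

// Simplified hierarchical: the coordinator hands the query to each subordinate in turn.
class HierarchicalStrategy extends DelegationStrategy {
  async execute(query, agentPool) {
    const results = [];
    for (const agent of agentPool) results.push(await agent.run(query));
    return { pattern: "hierarchical", results };
  }
}

// Competitive evaluation: agents answer independently, a scorer picks the winner.
class CompetitiveEvaluationStrategy extends DelegationStrategy {
  constructor(score = (text) => text.length) { // placeholder scorer: longest answer wins
    super();
    this.score = score;
  }
  async execute(query, agentPool) {
    if (agentPool.length === 0) throw new Error("agentPool is empty");
    const answers = await Promise.all(agentPool.map((agent) => agent.run(query)));
    const winner = answers.reduce((best, a) => (this.score(a) > this.score(best) ? a : best));
    return { pattern: "competitive-evaluation", winner, answers };
  }
}

// Registry: strategies are looked up by name, so swapping one is a data change.
class DelegationRegistry {
  constructor() { this.strategies = new Map(); }
  register(name, strategy) { this.strategies.set(name, strategy); return this; }
  getStrategy(name) {
    const strategy = this.strategies.get(name);
    if (!strategy) throw new Error(`No strategy registered as "${name}"`);
    return strategy;
  }
}

const delegationRegistry = new DelegationRegistry()
  .register("hierarchical", new HierarchicalStrategy())
  .register("competitive-evaluation", new CompetitiveEvaluationStrategy());

const agentPool = [
  { run: async (q) => `short take on ${q}` },
  { run: async (q) => `a longer, more detailed take on ${q}` },
];
delegationRegistry.getStrategy("competitive-evaluation")
  .execute("pricing", agentPool)
  .then((r) => console.log(r.winner));
```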

Version 3 tackled reliability. Up to this point agent responses were free-form text, so the coordinator had to parse and interpret them, which was error-prone. Version 3 introduced a JSON-RPC communication layer: agents were prompted to return structured JSON conforming to a defined schema, with findings, insights, recommendations, confidence levels, and metadata. Parsing failures triggered automatic retry with enhanced prompts before falling back to text extraction. Inter-agent context passing improved: instead of forwarding raw text between agents, each agent received structured summaries of what previous agents had found.

```json
{
  "jsonrpc": "2.0",
  "result": {
    "analysis": {
      "summary": "...",
      "findings": [{ "category": "...", "confidence": "high", "evidence": ["..."] }]
    },
    "recommendations": [{ "title": "...", "rationale": "...", "priority": "high" }],
    "metadata": { "processing_time_ms": 1420, "validation_status": "validated" }
  }
}
```
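The retry-then-fallback flow described for Version 3 can be sketched as follows. This is a hypothetical outline, not Worksona's code: `callAgent(prompt)` stands in for whatever returns the model's raw text, the validation checks only the fields shown in the schema above, the retry wording is invented, and the `fallback_text` status value is made up for the example.

```javascript
// Hypothetical structured-response handling: validate, retry with a stricter prompt, then fall back.

// Shape check against the fields in the schema above.
function isValidResponse(obj) {
  const result = obj && obj.result;
  return Boolean(
    obj && obj.jsonrpc === "2.0" &&
      result && result.analysis &&
      typeof result.analysis.summary === "string" &&
      Array.isArray(result.analysis.findings) &&
      Array.isArray(result.recommendations)
  );
}

// Models often wrap JSON in prose, so parse the outermost {...} span.
function tryParse(text) {
  const start = text.indexOf("{");
  const end = text.lastIndexOf("}");
  if (start === -1 || end <= start) return null;
  try {
    const obj = JSON.parse(text.slice(start, end + 1));
    return isValidResponse(obj) ? obj : null;
  } catch {
    return null;
  }
}

// One initial call plus up to maxRetries retries with an enhanced prompt.
async function getStructuredResponse(callAgent, prompt, maxRetries = 2) {
  let text = await callAgent(prompt);
  for (let attempt = 0; ; attempt++) {
    const parsed = tryParse(text);
    if (parsed) return parsed;
    if (attempt >= maxRetries) break;
    text = await callAgent(
      `${prompt}\n\nRespond ONLY with a JSON-RPC 2.0 object matching the schema. No prose.`
    );
  }
  // Fallback: wrap the raw text in the expected envelope so downstream code has one path.
  return {
    jsonrpc: "2.0",
    result: {
      analysis: { summary: text.trim(), findings: [] },
      recommendations: [],
      metadata: { validation_status: "fallback_text" },
    },
  };
}
```

Wrapping the fallback text in the same envelope keeps the coordinator on a single code path, while the status field lets it treat unvalidated results with less trust.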

Version 4 addressed the debt that had accumulated in the file structure. Ten JavaScript files had grown around the core, each one an incremental patch. Version 4 consolidated them into three: worksona.js (the LLM abstraction library), worksona-enhanced.js, and a single app.js with four clearly delineated sections. This reduced the script tag count from ten to three and made the load chain predictable. The delegator logic was also extracted into a delegation-core/ directory for distribution.

Version 5 focused on output quality. Earlier versions produced structurally valid reports that were nonetheless fragmented: each agent's section read as isolated analysis rather than a developing argument. Version 5 introduced a narrative reporting framework. Each agent was assigned a story role (researcher provides context, analyst provides patterns, strategist provides implications, writer provides action steps, critic provides risk assessment), and agents were explicitly instructed to reference and build on previous agents' output. The resulting reports read as coherent business cases rather than collections of parallel summaries.
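The narrative framework combines two mechanics: a fixed order of story roles, and prompts that carry earlier agents' output forward. A minimal sketch, assuming a hypothetical `runAgent(agentName, prompt)` that returns `{ summary }`; the prompt wording and the `STORY_ROLES` table are illustrative, though the role-to-contribution mapping follows the list above.

```javascript
// Hypothetical narrative chain: roles run in order, each seeing earlier agents' output.
const STORY_ROLES = [
  { agent: "researcher", role: "provide context" },
  { agent: "analyst", role: "identify patterns" },
  { agent: "strategist", role: "draw implications" },
  { agent: "writer", role: "propose action steps" },
  { agent: "critic", role: "assess risks" },
];

// Build one agent's prompt: its story role plus summaries of earlier sections.
function buildNarrativePrompt(query, storyRole, priorSections) {
  const context = priorSections.length
    ? priorSections.map((s) => `${s.agent}: ${s.summary}`).join("\n")
    : "(you are first; there is no prior output)";
  return [
    `Query: ${query}`,
    `Your role in the report: ${storyRole.role}.`,
    `Earlier sections:\n${context}`,
    "Reference and build on the earlier sections rather than repeating them.",
  ].join("\n\n");
}

// Run roles sequentially so each prompt can cite the sections before it.
async function runNarrative(query, runAgent) {
  const sections = [];
  for (const storyRole of STORY_ROLES) {
    const prompt = buildNarrativePrompt(query, storyRole, sections);
    const { summary } = await runAgent(storyRole.agent, prompt);
    sections.push({ agent: storyRole.agent, summary });
  }
  return sections;
}
```

Running the roles in sequence gives up concurrency in exchange for continuity: a prompt can only reference sections that already exist.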

Where the pattern stands today

Five versions of the same core idea produced a system that selects delegation patterns automatically based on a multi-factor complexity score, communicates with agents over a structured schema with retry logic, passes accumulated findings as context between agents in sequence, and produces reports with narrative continuity across sections.
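The post does not say which factors feed the complexity score, so the sketch below invents three (domain count, subtask count, and whether the answer needs revision) with arbitrary weights and thresholds. It only illustrates the general shape of score-based selection over the v2 pattern names; `complexityScore` and `selectPattern` are hypothetical.

```javascript
// Hypothetical multi-factor complexity score; factors, weights and thresholds are invented.
function complexityScore({ domains, subtasks, needsRevision }) {
  // Normalize each factor to 0..1, then take a weighted sum (weights sum to 1).
  const raw =
    0.4 * Math.min(domains / 5, 1) +
    0.4 * Math.min(subtasks / 10, 1) +
    0.2 * (needsRevision ? 1 : 0);
  return Math.min(raw, 1);
}

// Map the score to one of the v2 pattern names (thresholds are illustrative).
function selectPattern(features) {
  const score = complexityScore(features);
  if (score < 0.3) return "hierarchical";
  if (features.needsRevision && score < 0.7) return "iterative-refinement";
  if (score < 0.7) return "peer-to-peer";
  return "hierarchical-federation";
}

console.log(selectPattern({ domains: 3, subtasks: 5, needsRevision: true }));
```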

The delegator is now the engine inside both the MCP server and the CLI. Those are the next two posts.